Among the tools for AI-generated imagery, ControlNet Pose stands out for the control it gives artists and creators. It conditions a generative model on a pose, so you can dictate the positioning and posture of the subjects in a composition instead of hoping a text prompt lands on the right stance. Whether you’re a seasoned digital artist, a hobbyist experimenting with AI tools, or a developer building creative software, understanding ControlNet Pose opens up a more deliberate way of working with generative models.

Imagine sculpting a figure in 3D modeling software, but instead of painstakingly adjusting vertices and bones, you simply sketch a pose or upload a reference image, and the AI does the heavy lifting. That’s the appeal of ControlNet Pose: it narrows the gap between what you intend and what the model produces. But how does it work? What types of content can you create with it? And why is it becoming a staple of the AI artistry toolkit? Let’s dive into the mechanics, applications, and creative possibilities of this technology.

The Science Behind ControlNet Pose: How It Transforms AI Artistry

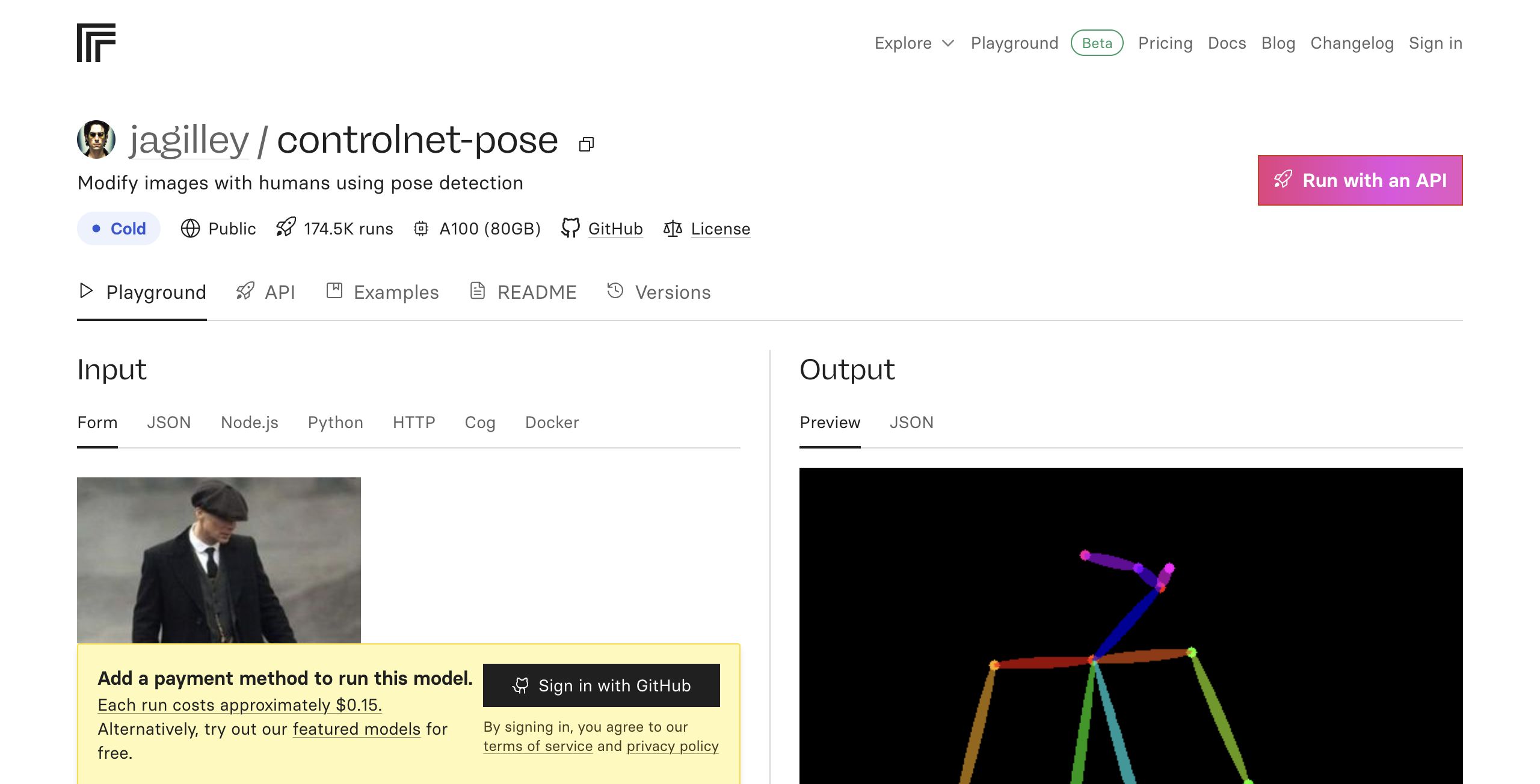

At its core, ControlNet Pose is a neural network that steers a generative model toward a given human pose. Unlike generative models that rely solely on text prompts, it adds a second input: pose data. In the standard pose variant, this is a set of skeletal keypoints (joints such as shoulders, elbows, and knees) rendered as a stick-figure image, usually extracted from a reference photo. Related ControlNet variants accept depth maps or rough sketches instead, each serving as a blueprint for the AI to follow.
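To make the idea of skeletal keypoints concrete, here is a minimal sketch that turns a handful of joints into a tiny stick-figure grid, which is roughly the kind of picture a pose-conditioned model receives. The joint names, the bone list, and the 9x11 grid are illustrative assumptions, not the format of any particular pose detector.

```python
# Toy rasteriser: turns skeletal keypoints into a small stick-figure grid.
# Joint names, bones, and grid size are illustrative assumptions.

KEYPOINTS = {
    "head": (4, 1),
    "neck": (4, 3),
    "left_hand": (1, 5),
    "right_hand": (7, 5),
    "hip": (4, 6),
    "left_foot": (2, 9),
    "right_foot": (6, 9),
}

BONES = [
    ("head", "neck"),
    ("neck", "left_hand"),
    ("neck", "right_hand"),
    ("neck", "hip"),
    ("hip", "left_foot"),
    ("hip", "right_foot"),
]


def draw_line(grid, start, end):
    """Mark the cells on a straight line between two points (Bresenham)."""
    (x0, y0), (x1, y1) = start, end
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        grid[y0][x0] = 1
        if (x0, y0) == (x1, y1):
            break
        e2 = 2 * err
        if e2 >= dy:
            err += dy
            x0 += sx
        if e2 <= dx:
            err += dx
            y0 += sy


def render_pose(keypoints, bones, width, height):
    """Return a height x width grid with 1 wherever the skeleton is drawn."""
    grid = [[0] * width for _ in range(height)]
    for a, b in bones:
        draw_line(grid, keypoints[a], keypoints[b])
    return grid


pose_map = render_pose(KEYPOINTS, BONES, width=9, height=11)
for row in pose_map:
    print("".join("#" if cell else "." for cell in row))
```

In practice the pose map is a full-resolution image rather than a character grid, but the principle is the same: joints become points, and bones become lines between them.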

The process begins with a pre-trained diffusion model, which generates images based on textual descriptions. However, when paired with ControlNet, the model gains an auxiliary input: a pose condition. This condition acts as a constraint, guiding the generation process to ensure the output aligns with the desired posture. For instance, if you provide a pose reference of a dancer mid-leap, the AI will prioritize generating an image that captures that dynamic movement, even if the prompt is as vague as “a person in motion.”
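The way the pose condition is attached can be sketched with toy numbers. In the published ControlNet design, the pre-trained model’s weights stay frozen, a trainable branch receives the condition, and the two are connected through layers initialised to zero, so training starts from the unmodified model’s behaviour. The functions and values below are simplified stand-ins chosen for illustration; real models apply convolutional blocks to image tensors.

```python
# Toy numbers only: a conceptual sketch, not a real diffusion model.

def frozen_block(x):
    """Stand-in for a layer of the pre-trained diffusion model (weights fixed)."""
    return [2.0 * v + 1.0 for v in x]


def control_branch(x, pose, w_in, w_out):
    """Stand-in for the trainable pose branch.

    w_in and w_out play the role of the zero-initialised projections:
    they start at 0.0 and are learned during training.
    """
    hidden = [xv + w_in * pv for xv, pv in zip(x, pose)]
    return [w_out * (2.0 * h + 1.0) for h in hidden]


def conditioned_block(x, pose, w_in, w_out, scale=1.0):
    """Frozen output plus the scaled correction from the pose branch."""
    base = frozen_block(x)
    residual = control_branch(x, pose, w_in, w_out)
    return [b + scale * r for b, r in zip(base, residual)]


x = [0.5, -1.0, 2.0]
pose = [1.0, 0.0, 1.0]

untrained = conditioned_block(x, pose, w_in=0.0, w_out=0.0)
trained = conditioned_block(x, pose, w_in=0.5, w_out=0.1)

print(untrained == frozen_block(x))  # the untrained branch changes nothing
print(trained)
```

The `scale` argument mirrors the strength setting many tools expose for the pose condition: at zero the pose is ignored, and larger values let it pull the result harder.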

The strength of this approach lies in its adaptability. ControlNet Pose isn’t limited to a single static figure: a pose map can contain several subjects, and a series of pose maps can drive a sequence of frames, although keeping a character consistent from frame to frame takes extra work. Because the model is trained on images paired with their extracted poses, what it learns is the geometry of a pose; mood and style still come mostly from the prompt and the base model. A slouched figure might evoke melancholy, while an outstretched arm could signify triumph, and the pose condition makes sure that body language carries through to the image.

Moreover, the integration of pose control doesn’t compromise the model’s creativity. Instead, it channels that creativity within defined parameters, ensuring that the generated content remains coherent and intentional. This balance between control and freedom is what makes ControlNet Pose a game-changer for artists who seek both precision and spontaneity in their work.

From Static Poses to Dynamic Sequences: Exploring Content Types

The versatility of ControlNet Pose opens the door to a vast array of content types, each catering to different creative needs and technical challenges. Here’s a breakdown of the most compelling categories you can explore:

1. Character Design and Pose Libraries

For character artists and illustrators, ControlNet Pose is a treasure trove. Instead of manually sketching dozens of poses for a single character, you can generate a diverse library with minimal effort. Need a knight in a defensive stance? A ballerina in a pirouette? A superhero mid-flight? Simply input the pose reference, and the AI delivers a range of options tailored to your specifications. This not only saves time but also inspires new ideas by presenting poses you might not have considered.

2. Fashion and Apparel Visualization

Fashion designers and stylists are leveraging ControlNet Pose to visualize clothing designs on models without the need for photoshoots. By inputting a pose reference, they can generate realistic or stylized renderings of garments draped on virtual models, complete with fabric textures and lighting effects. This allows for rapid prototyping and experimentation, reducing the cost and time associated with traditional fashion photography. Imagine testing how a dress flows in a twirl or how a jacket drapes over a seated figure—all within seconds.

3. Animation and Storyboarding

Animators and storyboard artists are finding ControlNet Pose invaluable for planning scenes and sequences. By generating keyframes based on pose references, they can quickly sketch out the flow of movement in a scene before diving into detailed animation. This is particularly useful for complex action sequences, where maintaining consistency in character poses is crucial. Directors can also use it to pre-visualize fight scenes, dance routines, or even subtle character interactions, ensuring that the final animation aligns with their vision.
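One simple way to get from a pair of key poses to a rough sequence is to blend the keypoints between them and render each in-between pose as its own reference. The sketch below assumes poses are stored as dictionaries of named 2D joints; the joint names and coordinates are hypothetical.

```python
def lerp_pose(pose_a, pose_b, t):
    """Blend two poses; t=0 gives pose_a, t=1 gives pose_b."""
    return {
        joint: (
            pose_a[joint][0] + t * (pose_b[joint][0] - pose_a[joint][0]),
            pose_a[joint][1] + t * (pose_b[joint][1] - pose_a[joint][1]),
        )
        for joint in pose_a
    }


def inbetweens(pose_a, pose_b, steps):
    """Return `steps` evenly spaced poses strictly between two keyframes."""
    return [lerp_pose(pose_a, pose_b, i / (steps + 1)) for i in range(1, steps + 1)]


# Hypothetical keyframes: an arm moving from lowered to raised.
arm_down = {"shoulder": (100.0, 100.0), "hand": (100.0, 200.0)}
arm_up = {"shoulder": (100.0, 100.0), "hand": (200.0, 100.0)}

frames = [arm_down, *inbetweens(arm_down, arm_up, steps=3), arm_up]
for i, frame in enumerate(frames):
    print(i, frame["hand"])
```

Straight-line blending is only a starting point: it moves the hand along a chord rather than an arc, so limbs can look shortened midway. Interpolating joint angles instead would keep limb lengths constant, at the cost of a little more bookkeeping.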

4. Fitness and Wellness Content

The fitness industry is another domain where ControlNet Pose shines. Personal trainers, yoga instructors, and physiotherapists are using it to create instructional content with precise pose demonstrations. Whether it’s a yoga asana, a weightlifting form, or a rehabilitation exercise, the AI can generate clear visuals that follow the reference pose closely. Generative models can still get hands and joint details wrong, so it is worth reviewing the output before handing it to students and clients as a guide. Used this way, the technology makes high-quality educational material easier to produce and helps people learn and practice safely.

5. Game Development and Virtual Worlds

Game developers are integrating ControlNet Pose into their pipelines to streamline character animation and asset creation. By generating pose libraries for NPCs (non-player characters), they can populate virtual worlds with diverse and lifelike figures without manually animating each one. This is especially useful for open-world games, where the sheer volume of character interactions demands efficient solutions. Additionally, indie developers can use ControlNet Pose to prototype game mechanics, testing how characters move and interact in different scenarios before committing to full production.

6. Artistic and Abstract Interpretations

Not all applications of ControlNet Pose are literal. Artists are using it to push the boundaries of abstraction, creating surreal and dreamlike compositions where poses are distorted, exaggerated, or merged with other elements. For example, a figure might be rendered with elongated limbs that defy physics, or a pose might be blended with natural landscapes to create a fantastical scene. This fusion of control and creativity allows for experimental art that challenges conventional perceptions of form and movement.

Mastering ControlNet Pose: Tips and Techniques for Optimal Results

While ControlNet Pose is a powerful tool, achieving professional-grade results requires a nuanced understanding of its capabilities and limitations. Here are some advanced tips to help you harness its full potential:

1. Refining Pose References

The quality of your pose reference directly impacts the output. For best results, use high-resolution images in which the whole figure is clearly visible and no limbs are hidden, so the keypoints can be detected reliably. If you’re working with a sketch or a rough drawing, ensure the proportions and angles are accurate to avoid distortions in the final image. Tools like OpenPose or MediaPipe can help you extract precise pose data from reference images, which you can then feed into ControlNet.
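Pose detectors generally report how confident they are in each joint, and a little cleanup before rendering the pose map can prevent stray limbs. The sketch below drops low-confidence joints and rescales the rest to the generation size. The input format, the joint names, and the 0.5 threshold are assumptions made for illustration, not the output format of OpenPose or MediaPipe.

```python
def clean_keypoints(detections, src_size, dst_size, min_confidence=0.5):
    """Drop low-confidence joints and rescale the rest to the target size.

    detections: {joint_name: (x, y, confidence)} in source-image pixels.
    Returns {joint_name: (x, y)} in target-image pixels.
    """
    src_w, src_h = src_size
    dst_w, dst_h = dst_size
    cleaned = {}
    for joint, (x, y, conf) in detections.items():
        if conf < min_confidence:
            continue  # unreliable joint: better missing than misplaced
        cleaned[joint] = (x * dst_w / src_w, y * dst_h / src_h)
    return cleaned


# Hypothetical detector output for a 1024x1024 reference photo.
detections = {
    "nose": (512.0, 128.0, 0.98),
    "left_wrist": (256.0, 640.0, 0.91),
    "right_wrist": (900.0, 610.0, 0.12),  # occluded, low confidence
}

cleaned = clean_keypoints(detections, src_size=(1024, 1024), dst_size=(512, 512))
print(cleaned)
```

Whether to drop an uncertain joint or fix it by hand depends on the image: a missing wrist leaves the model free to invent an arm position, which is sometimes fine and sometimes exactly the distortion you were trying to avoid.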

2. Balancing Prompts and Pose Control

ControlNet Pose works best when combined with descriptive prompts. While the pose provides the structural framework, the prompt adds context, style, and detail. For example, a prompt like “a cyberpunk samurai in a dynamic battle stance, neon lights, cinematic lighting” will yield a far more compelling result than a generic prompt. Experiment with different combinations to find the sweet spot between control and creativity.
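A systematic way to look for that sweet spot is to plan a small grid of runs rather than changing settings one at a time. The sketch below only builds the list of combinations; it does not call any image model. The `pose_strength` key is a placeholder for whatever weight your tool exposes for the pose condition (in Hugging Face diffusers, for example, the `controlnet_conditioning_scale` argument), and the values shown are illustrative rather than recommended.

```python
from itertools import product

prompts = [
    "a person in motion",
    "a cyberpunk samurai in a dynamic battle stance, neon lights, cinematic lighting",
]
pose_strengths = [0.6, 1.0, 1.4]  # illustrative values, not recommendations
seeds = [1, 2]


def build_jobs(prompts, pose_strengths, seeds):
    """List every prompt / pose-strength / seed combination to try."""
    return [
        {"prompt": p, "pose_strength": s, "seed": seed}
        for p, s, seed in product(prompts, pose_strengths, seeds)
    ]


jobs = build_jobs(prompts, pose_strengths, seeds)
print(len(jobs), "runs planned")
for job in jobs[:3]:
    print(job)
```

Fixing the seed while varying one setting makes comparisons fair: any difference between two images then comes from the prompt or the pose strength, not from random noise.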

3. Iterative Refinement

Don’t expect perfection on the first try. ControlNet Pose often requires iterative refinement. Start with a broad pose reference and gradually adjust the details—such as hand positioning, facial expressions, or clothing folds—to achieve the desired effect. Many users find it helpful to generate multiple variations and then select the best elements from each to composite a final image.

4. Leveraging Style Transfer

For artists who want to maintain a consistent artistic style, ControlNet Pose can be combined with style-specific models. By pairing it with a base model or fine-tune trained on a particular art style, such as anime, watercolor, or photorealism, you can generate poses that align with your preferred aesthetic. This is particularly useful for creating cohesive series or collections where uniformity is key.

5. Exploring Multi-Pose Generation

ControlNet Pose isn’t limited to single-subject images. Advanced users can experiment with multi-pose generation, where multiple figures interact within a single composition. This requires careful planning to ensure the poses are coherent and the spatial relationships between subjects are accurate. Techniques like depth estimation and occlusion handling can help create more realistic multi-subject scenes.
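Planning a multi-figure scene can start with simple geometry: place each skeleton on the shared canvas, then check which figures overlap so you know where one must be drawn in front of the other. This is a minimal sketch under the assumption that poses are dictionaries of named 2D joints; the figure, offsets, and scale factor are hypothetical, and bounding boxes are only a coarse test for overlap.

```python
def place(pose, offset, scale=1.0):
    """Scale a pose around its local origin, then shift it onto the canvas."""
    dx, dy = offset
    return {j: (x * scale + dx, y * scale + dy) for j, (x, y) in pose.items()}


def bounding_box(pose):
    """Return (min_x, min_y, max_x, max_y) for a pose."""
    xs = [x for x, _ in pose.values()]
    ys = [y for _, y in pose.values()]
    return min(xs), min(ys), max(xs), max(ys)


def boxes_overlap(a, b):
    """True if two bounding boxes intersect."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


# Hypothetical stick figure in its own local coordinates.
figure = {
    "head": (50.0, 0.0),
    "left_hand": (0.0, 80.0),
    "right_hand": (100.0, 80.0),
    "feet": (50.0, 200.0),
}

front = place(figure, offset=(40.0, 50.0))
back = place(figure, offset=(120.0, 90.0), scale=0.7)  # smaller: a crude depth cue
far = place(figure, offset=(300.0, 50.0))

front_back_overlap = boxes_overlap(bounding_box(front), bounding_box(back))
front_far_overlap = boxes_overlap(bounding_box(front), bounding_box(far))
print(front_back_overlap)
print(front_far_overlap)
```

Pairs flagged as overlapping are the ones that need an occlusion decision, for example by drawing the nearer skeleton last or by adding a depth map alongside the pose.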

The Future of ControlNet Pose: Where Art and AI Converge

As AI continues to evolve, the applications of ControlNet Pose are poised to expand even further. Emerging trends suggest a future where pose control becomes seamlessly integrated into real-time creative workflows, enabling artists to manipulate and refine their work on the fly. Imagine a digital painting application where you sketch a pose, and the AI dynamically adjusts the character’s posture in response to your brushstrokes. Or a virtual reality environment where you can physically pose a 3D model, and the AI translates your movements into a digital sculpture.

Moreover, combining ControlNet Pose, which already builds on text-to-image models, with other technologies such as 3D rendering engines and even brain-computer interfaces could unlock entirely new forms of artistic expression. The line between creator and tool is blurring, giving rise to a collaborative ecosystem where humans and AI co-create in ways previously unimaginable.

For now, ControlNet Pose stands as a testament to the power of human-AI collaboration. It empowers artists to transcend technical limitations, explore uncharted creative territories, and bring their visions to life with unprecedented precision. Whether you’re a digital artist, a designer, a developer, or simply someone who loves to experiment with AI, mastering ControlNet Pose is your gateway to a world where the only limit is your imagination.

As you embark on your journey with this transformative technology, remember that the most compelling creations often emerge from the intersection of control and spontaneity. Embrace the precision of pose control, but don’t shy away from the serendipitous moments where the AI surprises you with its own creative flair. In the realm of ControlNet Pose, the future isn’t just about following a script—it’s about co-authoring one.
